Survey: How 132 Engineers at Anthropic Use Claude

AI assistants are reshaping how engineers work. A recent survey shows that Claude now figures in roughly 60% of engineers’ daily work, taking over low-value tasks like debugging and code comprehension. Crucially, 27% of Claude-assisted work consists of tasks that would not otherwise have been done at all. As the AI execution chain lengthens from 9.8 to 21.2 consecutive tool calls per task, the number of human interventions per task drops by about a third (from 6.2 to 4.1). This new human-AI collaboration model is fostering a core capability of “task breakdown + AI delegation + result verification,” and it is prompting reflection on skill gaps and career paths.

Anthropic surveyed 132 of its engineers on how they use Claude in their daily work, quantifying their usage frequency, experiences, and self-assessed productivity across different tasks. It also sampled and analyzed 200,000 Claude Code conversation logs to see where engineers are actually applying AI, how complex those tasks are, and how much human intervention they require.

01 Not Just Time Savings, But Getting More Done

1. Claude has entered 60% of workflows

In the survey, Anthropic engineers reported:

- A year ago, only 28% of their work involved Claude, with a self-reported efficiency gain of about 20%;

- This year, the same individuals are using Claude in 59% of their work, with self-reported efficiency gains around 50%;

- About 14% of respondents, the heavy users, believe their output has more than doubled.

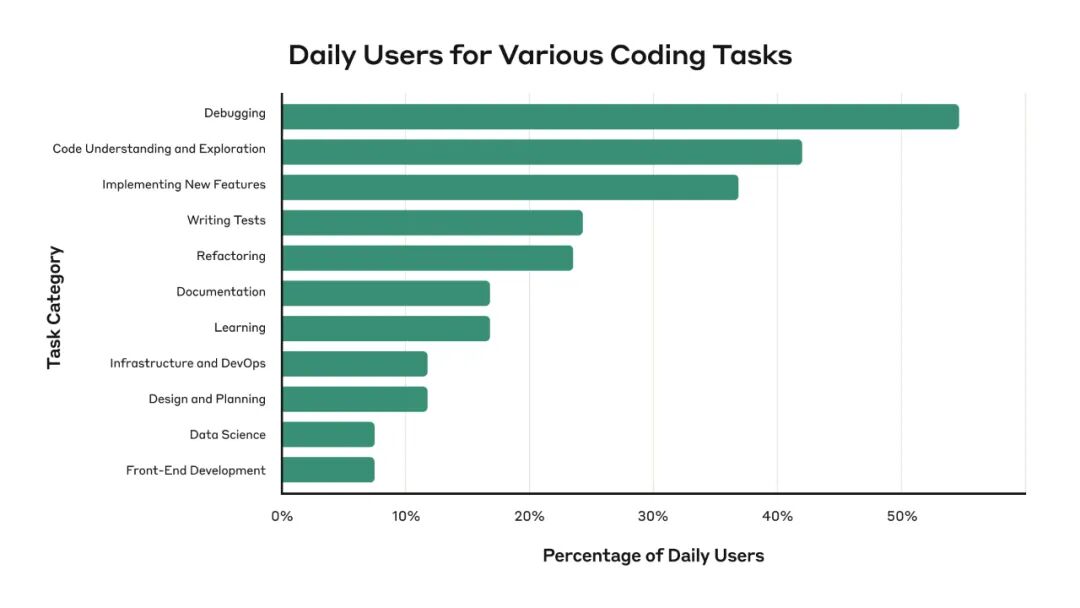

Interestingly, the most common usage scenarios are not for writing new features, but for debugging and understanding old code:

- 55% use Claude daily to check for bugs;

- 42% use it daily to understand code;

- 37% use it daily to implement new features.

This indicates that AI is first taking over low-value but necessary tasks, rather than “building rockets.”

2. 27% of work was previously unattempted

One noteworthy statistic is:

Employees believe that 27% of the work they accomplish with Claude is something they would not have done otherwise.

These “from nothing to something” tasks include documentation, testing, and refactoring that were previously deemed too tedious; small quality-of-life tools that don’t affect KPIs; and exploratory projects and additional experiments.

This directly explains why many teams feel they are not significantly less busy, yet they are accomplishing more.

While time savings are slight, the key point is that AI has brought those tasks that were “always at the bottom of the to-do list” onto the agenda.

3. Not “fully handing over to AI,” but “high-frequency collaboration”

Despite frequent use, over half of the engineers believe that the work they can “completely hand over to Claude without checking” is only 0-20%. This is also confirmed by behavioral data:

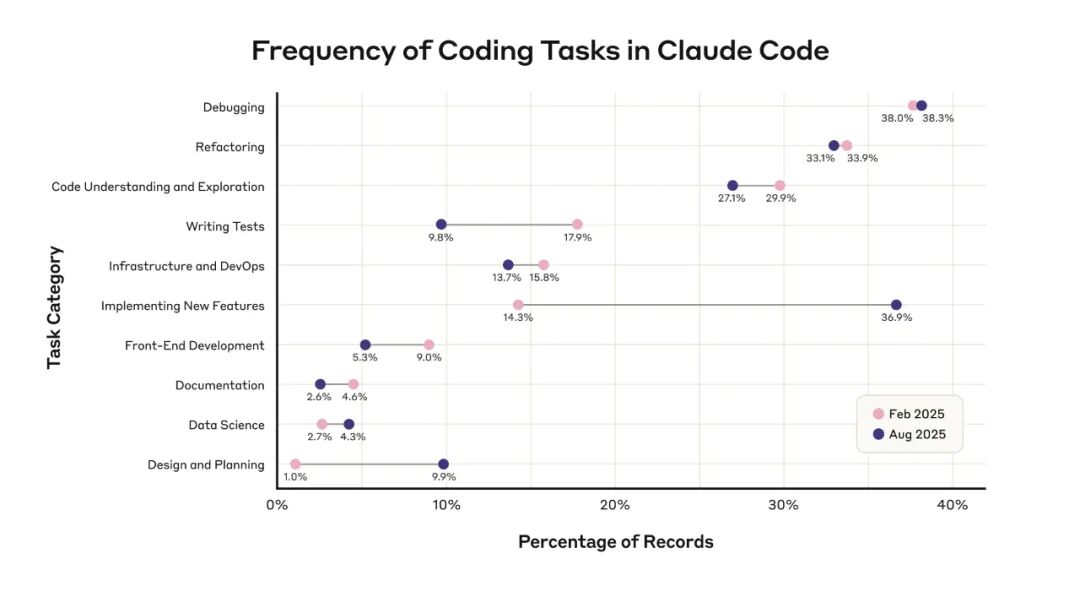

Over six months, the average complexity of tasks in Claude Code increased from 3.2 to 3.8 (out of 5), while the number of successive automated tool calls per task rose from 9.8 to 21.2, and the frequency of human interventions dropped from 6.2 to 4.1.

In simple terms: the AI is taking on longer chains of tasks, while human involvement is decreasing, but we are far from a situation where “no human is needed.”
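The shifts quoted above are easier to compare as relative changes. The following is a minimal sketch that only reuses the three before/after pairs from the text; the helper name `pct_change` and the metric labels are illustrative, not taken from the report.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# Before/after figures quoted in the text for the six-month window.
metrics = {
    "task complexity (out of 5)": (3.2, 3.8),
    "consecutive tool calls per task": (9.8, 21.2),
    "human interventions per task": (6.2, 4.1),
}

for name, (before, after) in metrics.items():
    change = pct_change(before, after)
    print(f"{name}: {before} -> {after} ({change:+.0f}%)")
```

On these numbers, the tool-call chain more than doubles while human interventions fall by roughly a third, which matches the “longer chains, less oversight, but not zero oversight” reading.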

02 Behavioral Patterns: The Real Difference Lies in AI Usage

From interviews and logs, a clear set of AI usage guidelines emerges, which essentially represents the core skills of future engineers.

1. What tasks do engineers delegate to AI? Engineers generally hand Claude tasks that are:

- Low context + low complexity: tasks in areas they are unfamiliar with but that are not difficult, such as simple Linux commands or Git operations;

- Easy to verify: tasks where the results can be quickly assessed, like format conversions, small tools, or simple SQL;

- Divisible into independent modules: tasks where a sub-module is loosely coupled with the main system, so mistakes won’t collapse the entire system;

- Low quality requirements: one-off debugging scripts or code for research;

- Tedious and repetitive tasks they prefer not to do: refactoring, documentation, chart creation, etc.

An interesting observation is: “If a task can be completed in 10 minutes by oneself, many people are reluctant to open Claude.” This boundary essentially represents the startup cost of invoking AI.

2. What tasks do engineers retain? Most people keep these tasks for themselves:

- Key designs at the product and architecture level;

- Decisions that hinge on organizational context and trade-offs;

- Work related to “taste” or “style,” such as interaction details;

- Any tasks where “the cost of error is high.”

However, this boundary is not stable; as model capabilities improve, the range of tasks that can be delegated to AI is continually expanding.

This is also evident in the logs: the share of Claude usage devoted to new features and code design/planning has almost tripled over six months.
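The delegate/retain criteria listed above can be read as a rough decision rule. The sketch below is only an illustration of that reading, not a procedure from the survey: the `Task` fields, the `should_delegate` function, and the hard 10-minute cutoff are all hypothetical simplifications of the bullets and the “10 minutes” remark.

```python
from dataclasses import dataclass

# Assumption: treat the "10 minutes" remark as a fixed startup-cost threshold.
STARTUP_COST_MINUTES = 10


@dataclass
class Task:
    name: str
    minutes_by_hand: float   # rough estimate of doing it yourself
    easy_to_verify: bool     # can the result be checked quickly?
    loosely_coupled: bool    # would a mistake stay contained?
    high_error_cost: bool    # e.g. key architecture or product decisions
    tedious: bool = False    # repetitive work people prefer not to do


def should_delegate(task: Task) -> bool:
    """Rough heuristic: delegate contained, checkable work that is worth the setup."""
    if task.high_error_cost:
        return False  # retained by the engineer
    if task.minutes_by_hand <= STARTUP_COST_MINUTES and not task.tedious:
        return False  # quicker to just do it by hand
    return task.easy_to_verify and task.loosely_coupled


tasks = [
    Task("convert CSV export to JSON", 30, True, True, False),
    Task("rename one variable", 2, True, True, False),
    Task("redesign the auth architecture", 240, False, False, True),
]
for t in tasks:
    print(f"{t.name}: {'delegate' if should_delegate(t) else 'keep'}")
```

Because the boundary moves as models improve, the thresholds in any such rule would need to be revisited regularly rather than fixed once.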

3. Capability structure is changing: more “full-stack,” but also a “supervision paradox.”

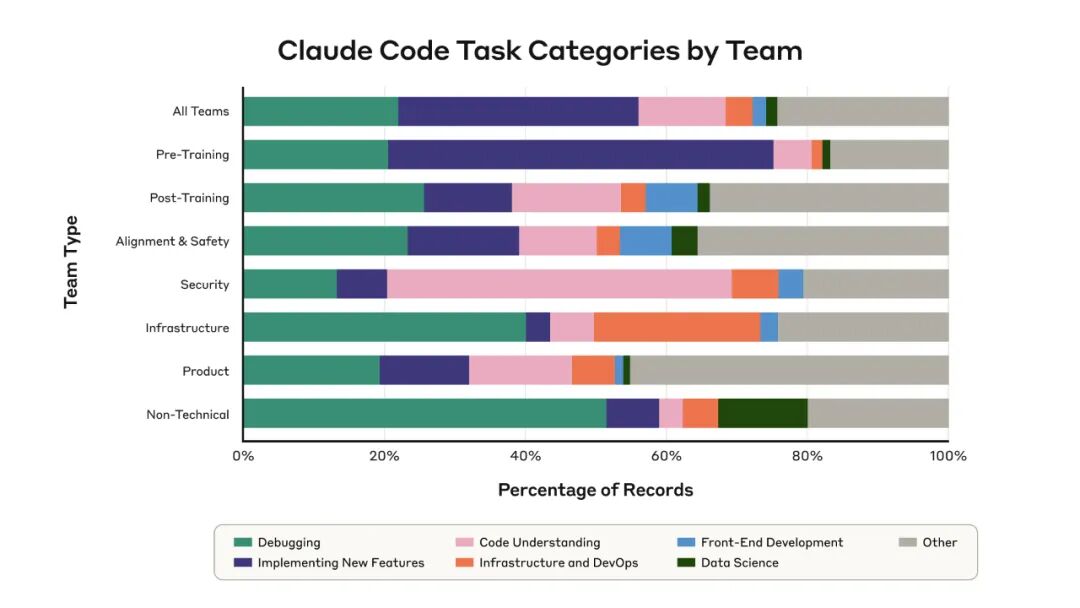

AI is clearly making engineers more full-stack:

- Backend engineers are tackling frontend and data visualization tasks;

- Security teams can quickly analyze risks in unfamiliar modules;

- Non-technical roles can also solve network and scripting issues with Claude Code.

However, many are beginning to worry: as more implementation work is handed over to AI, they get fewer opportunities to write code themselves. Will they still be able to understand AI-written code in the future?

The report describes this well as the supervision paradox:

Effective use of AI requires the ability to supervise it; however, over-reliance on AI can lead to a gradual loss of that ability.

Some engineers are consciously going against this trend: even though they know Claude can handle it, they occasionally insist on writing it themselves to retain that part of their “muscle memory.”

03 What Three Cognitive Upgrades Can We Gain?

Insight 1: AI efficiency gain ≠ just doing existing tasks 50% faster; it also means tackling that “27% no one was doing”

From the data, Anthropic’s engineers did not spend the saved time slacking off but filled it with new tasks: more experiments, more refactoring, and more exploratory work.

For any company, this means:

- If you only use AI to compress existing work hours, you might gain 20-30%;

- But if you use AI to tackle that 27% of tasks that were previously untouched, such as experience optimization and quality improvement, the marginal value will be higher.

For managers: The right question is not “How much time can we save on this project with AI?” but “What new tasks can we take on with AI that we never did before?”

Insight 2: The true core competency is “task breakdown + AI delegation + result verification”

From this report, those heavy users with doubled productivity share three common traits:

- They can break down tasks, turning big problems into a series of small modules that AI can easily handle;

- They can select tasks, only delegating easily verifiable and controllable parts to AI;

- They can verify results, knowing when to reimplement themselves and when sampling checks are sufficient.

These three aspects essentially combine product thinking + technical judgment + risk control, rather than simply knowing how to write prompts.

The future competitiveness of engineers may not lie in how many lines of code they can write in an hour, but in how many AI tasks they can orchestrate in an hour while ensuring nothing goes wrong.

Insight 3: Career paths are shifting upward; low-level skills may be skipped altogether

Previously, learning programming followed a relatively standard path: starting from writing basic syntax and data structures and gradually moving to higher abstractions.

Now, newcomers may very well start directly from using AI to write code. This will lead to two outcomes:

- The value of foundational skills is being re-priced: not everyone needs to write low-level code, but there must be some who can understand and modify it; they will become the truly scarce “reviewers” in the AI era.

- Promotion channels are shifting from “doing a lot” to “managing well”: many engineers are beginning to define themselves as managers of 1/5/100 Claudes, rather than as senior coders.

In conclusion, the results of this survey both meet my expectations and exceed my imagination. I would also like to offer a few suggestions:

If you are developing AI tools, consider asking yourself three questions:

- Can you help users solve that 27% of tasks that were previously unattempted?

- Can you integrate AI into their real CI/CD, monitoring, and knowledge bases, rather than just a webpage?

- Can you help managers see clearly what tasks AI has accomplished and what risks it has taken on?

For enterprises, the more critical questions are no longer whether to adopt AI, but:

- What tasks are you prepared to delegate to AI?

- Who will supervise these AIs?

- As the learning paths, collaboration dynamics, and career expectations of teams are rewritten, do you have new organizational designs and talent strategies in place?

Wishing you a great day!
